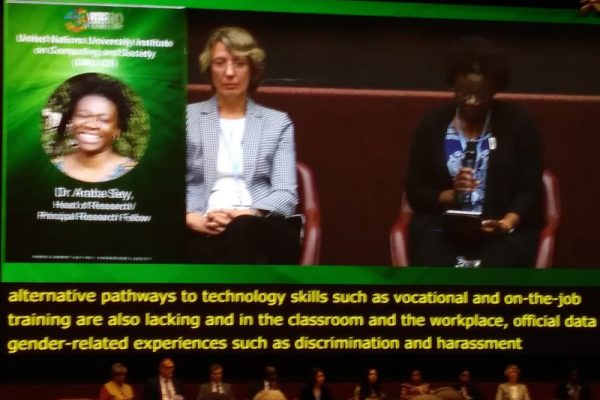

The WSIS Forum is an annual event organized by ITU in cooperation with the UN and other international bodies. This year’s Forum, held April 8-12, marked its 10th anniversary. The theme of the Forum was “Information and Communication Technologies for achieving the Sustainable Development Goals”.

This year, the Forum included high-level policy sessions on the ethical dimensions of information and knowledge societies and a high-level strategic dialogue on ICTs for achieving the SDGs. It also celebrated 10 years of the WSIS Forum.

The WSIS Forum official outcome documents may be found here.

Ethical dimensions of AI

Among the many panels we attended, the one on the ethical dimensions of AI stood out, given our interest in the subject. The panelists identified five principles for AI systems that respect human rights and dignity: AI systems should respect human rights and fairness; be transparent and explainable; be robust, safe and secure; and be accountable. Most importantly, they should promote inclusive growth and well-being.

AI should focus on the most vulnerable. This is where we find common ground across systems. People should focus more on AI and less on autonomous vehicles and their decision making.

– Peter Paul Verbeek

The panelists agreed that the policy domain faces a steep learning curve, as illustrated by the early work at the OECD. They asked what the common challenges are, what the common direction is, and where we want it all to end up.

Monique Morrow, President, VETRI Foundation, seemed pessimistic. She said that bots are creating their own languages. These technologies are used in private industry and can be perceived as intrusive. She gave examples of predicting future crime, such as the scenario in Minority Report. She stressed that when we think about weapons systems, we have to look at where “that human” is going to be in the loop. We need technologies that are more inclusive.

Why ethics in AI?

Amandeep Singh Gill, Executive Director, Secretariat of the High-Level Panel on Digital Cooperation, ex officio, said that ethics has always played a role in ambiguous situations. There needs to be a commitment to human agency, followed up by a commitment to global, multistakeholder collaboration. We also need to maximise the enabling role of the Sustainable Development Goals (SDGs), using them as guardrails to prevent misuse and reduce the risk of social and individual harm. We must follow through with a fanatical commitment to human agency and not let it leak away to the digital. There is a lack of awareness of human relationships, and even of their presence. We need a fanatical commitment to collaboration – cooperation for the digital age. AI itself can be an important tool in driving that collaboration.

Mei Lin Fung, co-Founder, People Centered Internet, said that AI is a lot of lines of code building bridges, but there are no institutional and commercial guardrails to ensure they are used wisely, as seen in the example of the Boeing 737 MAX failures. AI is not magic. It is a human tool and, like fire, it needs to be used with caution.

Sandy Parakilas, the Facebook whistleblower, is a case in point. People’s data was being used unethically, and he had no recourse but to resign his position. Tech workers should not be put in positions where they do not know where to turn. We need these guardrails to protect them.

Salma Abbasi, Chairperson and CEO, The eWorldwide Group, talked about the educational side of things. She said that a framework, policies and governance need to be put in place. We are not exposed to ethics in AI until we graduate or join an organisation as employees, and by that point it is too late. This makes it more difficult to voice ethical concerns under commercial pressure.

Ethics is not about morals but about social constructs; it puts social justice and social responsibility in focus.

– Mei Lin Fung

AI is not new. It is a continuation of existing technologies. We should go back to schools and academia and see whether children discuss civic responsibilities and the human side and consequences of all work; waiting until they graduate is too late. One example of AI’s intrusive behavior: someone once mentioned condoms in conversation and had them delivered to their home because Alexa had overheard.

We humans have been so driven to optimize, to do things better and more efficiently, and have become so enamored with our tools that we have forgotten about people. AI should be complementary to humans, not set against them.

Report on the Ethics of AI

Peter Paul Verbeek, UNESCO’s Commission for the Ethics of Science and Technology (COMEST), looked into the future that robots and artificial intelligence are shaping. He suggested that we:

- expand discussion from the level of individuals to the level of the community.

- move the discussion of AI from hype to reality.

- learn not only how we use AI, but also how it is designed in the first place.

He said there is a need for ethical principles, such as autonomy and transparency, especially regarding the data we feed these systems. We need ethically aligned design, building on a transition towards value design. How can we ensure that cultural diversity, gender diversity and inclusiveness stay on the table, along with the notion of democracy?

We have to recognize that bad data generates bad AI decision-making. The more data an AI system has, the more efficient its decision-making, but this raises numerous privacy issues, and too much data may also produce dubious decisions. We need to look at how data defines us and maintain our agency so that we can supervise algorithms.

Konstantinos Karachalios, Managing Director, IEEE, said that for AI systems to benefit us, they need to be able to predict, plan better and optimize. AI has huge potential to help humans achieve a level of sufficiency in food and resources, but corporations have their own agendas. We need an intergovernmental agreement on AI. We need to think about the potential of AI for economic growth, inclusive growth and human well-being as much as about addressing the social and societal risks of AI at the individual level. This also sets a number of priorities for government policy on AI.

Nicolas Miailhe, co-Founder and Director, The Future Society, said that ethics unfolds in the reality of people’s everyday practices, emerging from the ground up in a digital and global context. It is therefore a global phenomenon that requires global coordination. Solving for everyone, in a way that addresses the universal yet respects pluralism, diversity and the fact that we may not all want the same future at a granular level, remains a difficult challenge, especially since the digital revolution is accompanied by a concentration of wealth and power.

What do we need?

- A council recommendation, followed up in the form of a policy observatory at UNESCO.

- Attention to behavioral analytics and sentiment analytics used in hiring, which analyze candidates as they speak.

- A Hippocratic oath for people engaged in AI development.

- A discussion of what kinds of regulation we should put in place, how existing policy silos are performing, and what consumer protection is needed to address these issues.

Quotations from different sessions

“Technology can be a key that unlocks long-term prospects, or it could be a curse that deepens inequalities, depending on the policy response that is chosen.”

– Mrs Gulden Turkoz-Cosslett

“The information society is not only about societies being changed through digital technology, but also about the fact that the information society has a responsibility to shape how tech is developed and used. We need to build systemic understanding and thinking: building trustworthiness frameworks.”

– Norbert Bollow

We need media platforms that are credible and authentic. It’s all about peer-to-peer communication at the civic level, at the level of the community and the village. How can we reinforce civic media and peer-to-peer communication, and turn the social media model on its head?

– Leonard Doyle

To view the complete webcast of the Ethical Dimensions of AI and/or other webcasts from the Forum, click here.